AI is advancing rapidly, and firms must adopt it to stay ahead. As software grows more complex and updates ship more often, traditional testing methods can fall short. Manual testing is slow and often misses critical edge cases, which leads to delays, performance gaps, and bugs in production that later hurt the user experience.

AI testing services aim to address the challenges of manual testing. AI experts automate the testing process and make sure the software is verified with every update. The AI testing team generates test cases and runs them in different environments to validate the product’s performance. The AI-powered testing market is expected to reach USD 4.64 billion by 2034.

AI-powered solutions combine innovative AI testing services with human intelligence to deliver speed, quality & accuracy. They continuously monitor and analyze defects, optimize test strategies, and offer real-time insights, freeing your team to focus on innovation.

Firms can unleash the potential of automation to deliver robust, data-driven results. Properly tested AI solutions can deliver 5X faster time to market and help businesses offer quality, unmatched reliability, scalability, and a better user experience. As AI adoption increases, staying current demands more than implementation.

To keep your business future-ready with AI, read on for the various types of AI testing offered by an AI testing services provider.

What Is AI Testing?

🢣 Definition of AI Testing

AI-powered testing goes beyond traditional methods by offering features such as self-healing capabilities, predictive analytics & no-code test automation. Advanced AI-powered testing tools use AI to automate test creation, maintenance & execution, making software testing more reliable and efficient. An AI testing company’s support team also manages test maintenance, one of the most time-consuming parts of automated testing.

AI testing companies can cut testing cycle time by up to 50%, enable faster releases, and reduce QA overhead by 50-70%. AI testing involves generating test cases, identifying flaky tests and broken scripts, and prioritizing tests and high-risk areas based on code changes or user behavior. It expands test coverage while reducing the manual effort needed to maintain the remaining tests.
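
Flaky-test detection, one of the tasks listed above, can be illustrated with a simple heuristic: a test that both passes and fails on the same commit is probably flaky. The sketch below is a minimal example that assumes a hypothetical CI history of `(test_name, commit_sha, passed)` tuples; commercial AI testing tools use richer signals than this.

```python
from collections import defaultdict


def find_flaky_tests(runs):
    """Return tests that both passed and failed on the same commit.

    `runs` is a hypothetical CI history: (test_name, commit_sha, passed) tuples.
    """
    outcomes = defaultdict(set)
    for test_name, commit_sha, passed in runs:
        outcomes[(test_name, commit_sha)].add(passed)
    # Mixed outcomes on identical code point to flakiness, not a real regression.
    return sorted({test for (test, _), seen in outcomes.items() if len(seen) > 1})


runs = [
    ("test_login", "a1", True),
    ("test_login", "a1", False),
    ("test_checkout", "a1", True),
    ("test_checkout", "a1", True),
    ("test_search", "b2", False),  # consistently failing: a real bug, not flaky
]
print(find_flaky_tests(runs))  # ['test_login']
```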

How AI Testing Differs from Traditional Software Testing

❑ Non-deterministic outputs

Because they rely on statistical models, AI systems can generate different answers for the same input. This complicates validation and calls for tolerance-based assertions rather than exact, predetermined outcomes.
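
A minimal sketch of a tolerance-based assertion in Python. `noisy_model` is a hypothetical stand-in for a non-deterministic model, and the tolerance of 0.5 is an arbitrary illustration; in practice the tolerance would come from the product’s own accuracy requirements.

```python
import random
import statistics


def noisy_model(x, rng):
    """Hypothetical non-deterministic model: the ideal answer is 2*x, plus noise."""
    return 2 * x + rng.gauss(0, 0.05)


def assert_within_tolerance(predict, x, expected, tol, trials=20, seed=0):
    """Call `predict` repeatedly and require every output to be within `tol` of `expected`."""
    rng = random.Random(seed)  # a fixed seed keeps the test itself reproducible
    outputs = [predict(x, rng) for _ in range(trials)]
    worst = max(abs(o - expected) for o in outputs)
    assert worst <= tol, f"max deviation {worst:.3f} exceeds tolerance {tol}"
    return statistics.mean(outputs)


# Passes: outputs vary from call to call but stay inside the tolerance band.
mean_output = assert_within_tolerance(noisy_model, 3.0, expected=6.0, tol=0.5)
```

An exact-equality check (`tol` close to zero) would fail on almost every run, which is the validation problem described above.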

❑ Continuous learning models

Unlike static software, AI models evolve through continuous training, which calls for ongoing testing, monitoring, and validation. This keeps performance consistent and catches unintended behavior changes over time.

❑ Data dependency

AI performance depends heavily on data quality, diversity, and labelling accuracy, which makes data validation, bias detection, and preprocessing checks crucial components of the testing lifecycle.

❑ Model drift and retraining cycles

As production data shifts away from the data a model was trained on, the model’s accuracy can degrade. Teams need to detect this drift, retrain the model, and re-validate each new version before it replaces the one in production.
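
One common way to quantify data drift is the Population Stability Index (PSI), which compares a feature’s distribution in production with its distribution in the training data. The sketch below is a simplified, dependency-free version; the equal-width binning and the thresholds in the comment (below 0.1 stable, above 0.25 significant shift) are widely used rules of thumb rather than fixed standards.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a reference sample (`expected`) and a new sample (`actual`).

    Equal-width bins span the reference range; values outside it are clamped
    into the edge bins, and empty bins get a tiny floor to avoid log(0).
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


train = [i / 100 for i in range(100)]           # roughly uniform on [0, 1)
recent = [i / 100 + 0.005 for i in range(100)]  # nearly the same distribution
shifted = [0.5 + i / 200 for i in range(100)]   # squeezed into [0.5, 1)

# Rule of thumb: PSI < 0.1 is stable, PSI > 0.25 signals a significant shift.
print(population_stability_index(train, recent) < 0.1)    # True
print(population_stability_index(train, shifted) > 0.25)  # True
```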

Core Components of AI Systems That Require Testing

➥ Training datasets

To guarantee reliable model training and to prevent biased predictions or unjust results in real-world situations, datasets must be evaluated for quality, completeness, bias, and accuracy.

➥ Machine learning models

Models must be validated for accuracy, robustness, and scalability across a variety of inputs to ensure they perform dependably under different conditions and in edge cases.

➥ Data pipelines

To guarantee that data flows smoothly from collection to processing without loss, corruption, or transformation mistakes, data pipelines must be validated for integrity, consistency, and dependability.

➥ APIs and integrations

APIs and integrations are an essential part of AI systems, and testing them ensures accurate, secure, and smooth data transfer between all linked systems. Any API malfunction can impair model performance, produce inaccurate predictions, or cause system outages. API testing focuses mainly on validating JSON/XML payloads, authentication procedures, and error handling.
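
A minimal sketch of the JSON and error-handling checks described above. The response contract (`prediction`, `confidence`, `model_version`) is hypothetical; a real project would derive it from the API’s own specification and would typically use a schema-validation library.

```python
import json

# Hypothetical response contract for a prediction API: field -> required type.
REQUIRED_FIELDS = {"prediction": str, "confidence": float, "model_version": str}


def validate_prediction_response(status_code, body):
    """Return a list of contract violations for one API response (empty list = valid)."""
    if status_code != 200:
        return [f"unexpected status {status_code}"]  # e.g. 401 from a failed auth check
    try:
        payload = json.loads(body)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(payload, dict):
        return ["top-level JSON value is not an object"]
    errors = []
    for field, expected_type in REQUIRED_FIELDS.items():
        if field not in payload:
            errors.append(f"missing field '{field}'")
        elif not isinstance(payload[field], expected_type):
            errors.append(f"field '{field}' should be {expected_type.__name__}")
    confidence = payload.get("confidence")
    if isinstance(confidence, float) and not 0.0 <= confidence <= 1.0:
        errors.append("confidence outside [0, 1]")
    return errors


ok_body = '{"prediction": "spam", "confidence": 0.93, "model_version": "v2"}'
print(validate_prediction_response(200, ok_body))  # []
print(validate_prediction_response(401, ""))       # ['unexpected status 401']
```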

➥ User interaction layers

To guarantee that users obtain relevant, intelligible, and consistent replies in a variety of interaction settings, interfaces must be evaluated for usability, responsiveness, and accuracy of AI outputs.

Why AI Testing Is Critical for AI-Powered Platforms

⟹ Ensuring Model Accuracy

An AI testing company helps ensure that models provide accurate, consistent, and relevant outputs by verifying predictions against real-world outcomes, reducing errors, and improving overall system effectiveness across a variety of datasets and use cases.

⟹ Preventing Bias and Ethical Issues

By identifying biased data patterns, checking for fairness, and validating ethical AI behavior, an AI testing service provider reduces the risk of discriminatory outcomes and encourages responsible, transparent decision-making.
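
Fairness can be measured in several ways; one simple, widely used metric is the demographic parity difference, the gap in positive-prediction rates between groups. The predictions and group labels below are made up for illustration, and the acceptable gap is a policy decision each team has to set.

```python
from collections import defaultdict


def demographic_parity_difference(predictions, groups):
    """Largest gap in positive-prediction rate between groups, plus per-group rates.

    `predictions` are 0/1 model outputs and `groups` holds a sensitive attribute
    for the same rows; a gap of 0.0 means every group gets positives equally often.
    """
    totals, positives = defaultdict(int), defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    rates = {g: positives[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
gap, rates = demographic_parity_difference(preds, groups)
print(rates, gap)  # {'A': 0.75, 'B': 0.25} 0.5
```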

⟹ Maintaining Reliability and Performance

AI testing confirms that models perform well across varying workloads, data volumes, and real-world situations. It keeps response times low, which matters most in real-time applications such as chatbots, recommendation engines, and fraud detection systems. Performance testing also uncovers bottlenecks in data processing and system integrations.

⟹ Regulatory Compliance

Proper AI testing verifies how data is used, how the model reaches its decisions, and how transparent the system is. This lowers legal risk and supports compliance with industry regulations, data protection legislation, and evolving international standards.

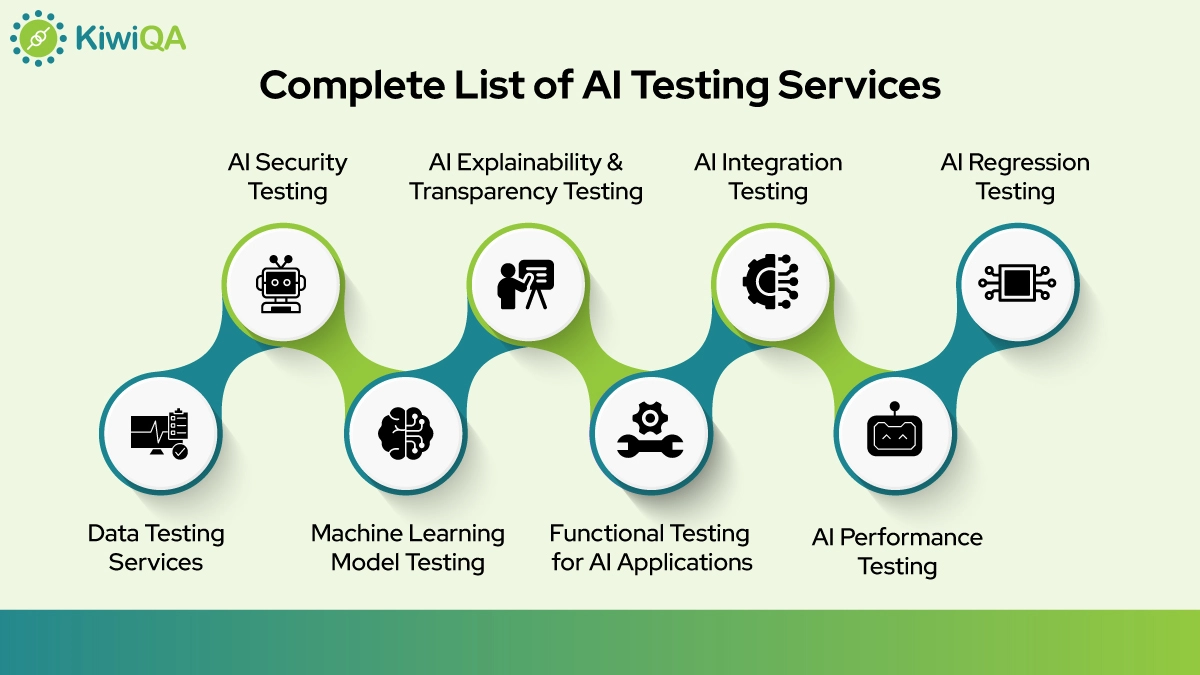

Complete List of AI Testing Services

☛ Data Testing Services

Data testing services verify the correctness of the datasets used in AI systems. They check data quality by finding anomalies, missing values, and inconsistencies that can affect model performance. These services often include data completeness checks to ensure that all necessary inputs are available and accessible.
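
The quality and completeness checks above can be sketched in plain Python. The column names and the valid range for `age` are hypothetical; at scale, teams usually rely on dedicated data-validation tooling.

```python
import math


def check_data_quality(rows, required, ranges):
    """Report missing values, out-of-range values, and duplicate rows.

    `rows` is a list of dicts, `required` lists mandatory columns, and `ranges`
    maps numeric columns to hypothetical (min, max) bounds.
    """
    issues = []
    seen = set()
    for i, row in enumerate(rows):
        for col in required:
            value = row.get(col)
            if value is None or (isinstance(value, float) and math.isnan(value)):
                issues.append((i, col, "missing"))
        for col, (lo, hi) in ranges.items():
            value = row.get(col)
            if isinstance(value, (int, float)) and not math.isnan(value) and not lo <= value <= hi:
                issues.append((i, col, "out of range"))
        key = tuple(sorted(row.items()))  # order-independent identity of the row
        if key in seen:
            issues.append((i, None, "duplicate row"))
        seen.add(key)
    return issues


rows = [
    {"age": 34, "income": 52000.0, "label": "approved"},
    {"age": None, "income": 61000.0, "label": "rejected"},
    {"age": 212, "income": 48000.0, "label": "approved"},
    {"age": 34, "income": 52000.0, "label": "approved"},
]
issues = check_data_quality(rows, required=["age", "income", "label"], ranges={"age": (0, 120)})
print(issues)  # [(1, 'age', 'missing'), (2, 'age', 'out of range'), (3, None, 'duplicate row')]
```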

For supervised learning models, data labelling validation verifies the consistency of annotations. Data drift detection identifies shifts in data patterns over time that may affect model quality. Bias detection is carried out to find unfair or imbalanced data distributions that could produce discriminatory results.
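
Label validation can start with a simple consistency check: the same input should not carry conflicting labels. This sketch assumes a hypothetical text-classification dataset of `(input_text, label)` pairs and treats inputs that differ only in letter case or surrounding whitespace as identical.

```python
from collections import defaultdict


def find_label_conflicts(examples):
    """Return {normalized_input: sorted_labels} for inputs annotated with more than one label."""
    labels_by_input = defaultdict(set)
    for text, label in examples:
        labels_by_input[text.strip().lower()].add(label)
    return {text: sorted(labels) for text, labels in labels_by_input.items() if len(labels) > 1}


examples = [
    ("Refund my order", "billing"),
    ("refund my order ", "complaint"),  # same request, different annotator decision
    ("Where is my parcel?", "shipping"),
    ("Where is my parcel?", "shipping"),
]
conflicts = find_label_conflicts(examples)
print(conflicts)  # {'refund my order': ['billing', 'complaint']}
```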

Dataset version control validation ensures that teams use the correct, up-to-date versions of datasets, keeping development cycles consistent. By safeguarding the integrity of the underlying data, data testing services are essential to building reliable, high-performing AI systems.
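
One lightweight way to validate dataset versions is to pin a content fingerprint and check it before training. The sketch below hashes JSON-serializable records with SHA-256; the record format and the idea of storing the expected fingerprint alongside the training configuration are assumptions for illustration.

```python
import hashlib
import json


def dataset_fingerprint(records):
    """SHA-256 over JSON-serializable records; dict key order does not matter."""
    digest = hashlib.sha256()
    for record in records:
        digest.update(json.dumps(record, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")  # newline between records, like a JSON Lines file
    return digest.hexdigest()


def check_dataset_version(records, expected_fingerprint):
    """Raise if the data about to be used is not the pinned dataset version."""
    actual = dataset_fingerprint(records)
    if actual != expected_fingerprint:
        raise ValueError(f"dataset mismatch: expected {expected_fingerprint[:12]}, got {actual[:12]}")


v1 = [{"id": 1, "label": "cat"}, {"id": 2, "label": "dog"}]
pinned = dataset_fingerprint(v1)  # hypothetically stored with the training config

# Passes: same content, different key order.
check_dataset_version([{"label": "cat", "id": 1}, {"id": 2, "label": "dog"}], pinned)
```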

☛ Machine Learning Model Testing

Machine learning model testing verifies the resilience, performance, and dependability of AI models before deployment, helping ensure that they produce accurate, reliable predictions across a range of situations and datasets. Model accuracy testing assesses how well the model performs against known outcomes, while precision and recall analysis offer deeper insight into classification performance, particularly on skewed datasets. Confusion matrix validation gives a thorough view of model behavior by tabulating true positives, false positives, true negatives, and false negatives.
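
The metrics above can be computed directly from confusion-matrix counts. This minimal sketch assumes binary labels encoded as 0/1; the deliberately skewed example shows why accuracy alone can mislead, since a model that never predicts the positive class still scores 80% accuracy.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count true/false positives and negatives for a binary classifier."""
    tp = fp = tn = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive and actual == positive:
            tp += 1
        elif predicted == positive:
            fp += 1
        elif actual == positive:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def classification_metrics(y_true, y_pred):
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    return {
        "confusion": {"tp": tp, "fp": fp, "tn": tn, "fn": fn},
        "accuracy": (tp + tn) / len(y_true),
        "precision": tp / (tp + fp) if tp + fp else 0.0,  # 0 when nothing is predicted positive
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


# Skewed data: 8 negatives, 2 positives, and a model that always predicts 0.
y_true = [0] * 8 + [1] * 2
y_pred = [0] * 10
print(classification_metrics(y_true, y_pred))
# {'confusion': {'tp': 0, 'fp': 0, 'tn': 8, 'fn': 2}, 'accuracy': 0.8, 'precision': 0.0, 'recall': 0.0}
```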

Cross-validation testing trains and tests the model on several data subsets to evaluate how well it generalizes to new data. Explainability testing ensures that the model’s conclusions are clear and understandable, which is essential for compliance. Adversarial testing also assesses the model’s response to manipulated or unexpected inputs to uncover weaknesses. Together, these techniques help ensure that machine learning models are trustworthy, safe, and ready for practical use.
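
A minimal k-fold cross-validation sketch. `fit_threshold` and `predict_threshold` form a hypothetical toy classifier; real projects would shuffle the data before splitting (the toy data here is already interleaved by class) and would normally use an established ML library’s cross-validation utilities.

```python
def k_fold_indices(n, k):
    """Yield (train_idx, test_idx) for k contiguous folds covering n samples."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = list(range(start)) + list(range(start + size, n))
        yield train_idx, test_idx
        start += size


def cross_validate(xs, ys, fit, predict, k=5):
    """Per-fold accuracy; `fit` and `predict` are caller-supplied model hooks."""
    scores = []
    for train_idx, test_idx in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        correct = sum(predict(model, xs[i]) == ys[i] for i in test_idx)
        scores.append(correct / len(test_idx))
    return scores


def fit_threshold(xs, ys):
    """Hypothetical toy model: a threshold halfway between the two class means."""
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2


def predict_threshold(threshold, x):
    return int(x >= threshold)


xs = [0.1, 0.9, 0.2, 0.8, 0.3, 0.7, 0.15, 0.85, 0.25, 0.75]
ys = [0, 1, 0, 1, 0, 1, 0, 1, 0, 1]
print(cross_validate(xs, ys, fit_threshold, predict_threshold, k=5))  # [1.0, 1.0, 1.0, 1.0, 1.0]
```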

☛ Functional Testing for AI Applications

Functional testing for AI applications ensures that all system functions and workflows work as intended once AI-driven components are integrated. Functional validation is harder than for traditional applications because AI systems must handle dynamic inputs and may produce varying outputs. This kind of testing confirms that AI responses, business logic, and user interactions match specified criteria and expected behavior.

It involves verifying that AI-powered features such as recommendations, predictions, or automated tasks work correctly within the application, testing input-output flows, and ensuring that APIs handle data appropriately. Edge cases and uncommon inputs are also tested to ensure the system handles them without malfunctioning.

Functional AI testing also ensures smooth platform integration by examining how AI components interact with other modules. Error handling, fallback mechanisms, and response consistency are assessed as well to provide a seamless user experience. By thoroughly verifying how their AI applications operate, businesses can make sure their systems not only work technically but also produce useful, dependable, and user-friendly results in real-world conditions.
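
Because AI outputs can vary, functional tests often assert invariants rather than exact results. The sketch below checks a hypothetical recommender (`recommend`, `CATALOG`, and `POPULAR_FALLBACK` are illustrative names) for properties that should hold whatever items the model picks, including the fallback path for unknown users.

```python
CATALOG = {"p1", "p2", "p3", "p4", "p5"}
POPULAR_FALLBACK = ["p1", "p2", "p3"]


def recommend(user_id, history, k=3):
    """Hypothetical recommender: unseen catalog items, or popular items for unknown users."""
    seen = history.get(user_id)
    if seen is None:
        return POPULAR_FALLBACK[:k]
    return sorted(CATALOG - set(seen))[:k]


def check_recommendations(items, k, already_seen=()):
    """Invariants that must hold no matter which items the model chooses."""
    problems = []
    if not items:
        problems.append("empty result")
    if len(items) > k:
        problems.append("more than k items")
    if len(set(items)) != len(items):
        problems.append("duplicate items")
    if not set(items) <= CATALOG:
        problems.append("unknown item ids")
    if set(items) & set(already_seen):
        problems.append("recommends already-seen items")
    return problems


history = {"u1": ["p1", "p2"]}
print(check_recommendations(recommend("u1", history), 3, history["u1"]))  # []
print(check_recommendations(recommend("new_user", history), 3))           # [] (fallback path)
```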

☛ AI Performance Testing

AI performance testing assesses how efficiently and consistently AI systems function under various conditions and workloads. It ensures that models maintain accuracy and consistency while producing results within a reasonable time. When systems process large volumes of data or handle many concurrent user requests, this testing examines response time, throughput, scalability, and resource consumption.

Performance testing also evaluates how AI models behave in real-time settings where delays can hurt the user experience, such as chatbots, recommendation engines, or predictive systems. Stress testing is carried out to determine system limits and spot bottlenecks or failures under extreme conditions.

Load testing verifies that the system can handle expected user traffic without performance degradation. Monitoring tools are frequently used to track performance metrics over time and catch problems early. AI performance testing also assesses how data volume and model complexity affect system efficiency and processing speed. With thorough performance testing, organizations can keep their AI-powered systems fast, scalable, and dependable, producing consistent results even as demand and data volumes grow.
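
A minimal load-test sketch that sends concurrent requests and reports latency percentiles. `fake_predict` is a hypothetical stand-in that sleeps for about 10 ms; in a real test it would call the deployed model endpoint, and the resulting p95 would be compared against the team’s own latency budget.

```python
import concurrent.futures
import math
import time


def fake_predict(payload):
    """Hypothetical stand-in for a model endpoint; replace with a real client call."""
    time.sleep(0.01)  # simulate roughly 10 ms of inference
    return {"ok": True}


def percentile(sorted_values, pct):
    """Nearest-rank percentile of an already sorted list."""
    rank = max(1, math.ceil(pct / 100 * len(sorted_values)))
    return sorted_values[rank - 1]


def load_test(predict, requests=50, concurrency=10):
    """Fire `requests` calls across `concurrency` threads and summarize latencies in seconds."""
    def timed_call(i):
        start = time.perf_counter()
        predict({"request_id": i})
        return time.perf_counter() - start

    with concurrent.futures.ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = sorted(pool.map(timed_call, range(requests)))
    return {"p50": percentile(latencies, 50), "p95": percentile(latencies, 95), "max": latencies[-1]}


stats = load_test(fake_predict)
print({name: f"{seconds * 1000:.1f} ms" for name, seconds in stats.items()})
```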

☛ AI Security Testing

AI security testing focuses on finding and fixing vulnerabilities in AI systems to defend them against malicious attacks, data breaches, and unauthorized access. Because AI models rely so heavily on data and advanced algorithms, they are vulnerable to threats such as adversarial attacks, data corruption, and model inversion. This kind of testing by an AI testing services provider assesses the system’s resistance to inputs deliberately altered to deceive the model. It also evaluates data security protocols to ensure that private data is properly encrypted and protected in transit and in storage.
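
One classic adversarial attack is the Fast Gradient Sign Method (FGSM), which nudges each input feature in the direction that increases the model’s loss. For a logistic-regression model the input gradient has a closed form, so the sketch below needs no ML library; the weights, input, and epsilon values are hypothetical.

```python
import math


def predict_proba(weights, bias, x):
    """Probability of class 1 under a logistic-regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 / (1 + math.exp(-z))


def sign(v):
    return (v > 0) - (v < 0)


def fgsm_perturb(weights, x, label, epsilon):
    """Fast Gradient Sign Method for a logistic model.

    The gradient of the cross-entropy loss w.r.t. x is (p - y) * w. Because
    0 < p < 1, sign(p - y) is -1 when y = 1 and +1 when y = 0.
    """
    loss_sign = -1 if label == 1 else 1
    return [xi + epsilon * loss_sign * sign(w) for w, xi in zip(weights, x)]


def is_robust(weights, bias, x, label, epsilon):
    """True if the predicted class is unchanged by an epsilon-bounded FGSM step."""
    clean = predict_proba(weights, bias, x) >= 0.5
    adversarial = predict_proba(weights, bias, fgsm_perturb(weights, x, label, epsilon)) >= 0.5
    return clean == adversarial


weights, bias = [2.0, -1.0], 0.0  # hypothetical trained parameters
x, label = [0.3, 0.1], 1
print(is_robust(weights, bias, x, label, epsilon=0.05))  # True
print(is_robust(weights, bias, x, label, epsilon=0.2))   # False: a small nudge flips the class
```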

Access control mechanisms are validated to prevent unauthorized users from interacting with the model or its underlying datasets. API security testing also ensures that external connections don’t introduce security gaps. In addition, AI security testing examines how models handle unexpected or harmful inputs, ensuring safe and controlled outputs.

Continuous monitoring and error-tracking strategies are implemented to identify risks in real time. Through comprehensive AI security testing, organizations can safeguard their systems, maintain user trust, and ensure compliance with data protection and cybersecurity standards.

☛ AI Explainability & Transparency Testing

AI explainability and transparency testing ensures that machine learning models generate outputs that stakeholders and consumers can understand, analyze, and rely on. As AI systems are increasingly used in key decision-making, it is crucial to explain clearly how models arrive at particular predictions. This testing determines whether techniques such as feature importance, decision trees, and model-agnostic tools can reveal the reasoning behind model decisions. It also ensures consistent, traceable results, enabling teams to audit decisions when necessary.
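
Permutation importance is one well-known model-agnostic technique: shuffle one feature at a time and measure how much accuracy drops. In this sketch the model and data are hypothetical; the model only looks at feature 0, so shuffling feature 1 should have no effect.

```python
import random


def accuracy(model, X, y):
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Model-agnostic importance: mean accuracy drop when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # breaks the link between feature j and the label
            X_perm = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def model(row):
    """Hypothetical model that only uses feature 0."""
    return int(row[0] > 0.5)


X = [[i / 20, (i * 7 % 20) / 20] for i in range(20)]
y = [model(row) for row in X]
importances = permutation_importance(model, X, y)
print([round(v, 3) for v in importances])  # feature 0 well above 0, feature 1 exactly 0.0
```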

Transparency testing by an AI testing services provider confirms that consumers are aware of, and understand, the limits of AI systems. It also determines whether the system avoids “black box” behavior by offering meaningful explanations alongside predictions. This process helps uncover hidden biases, supporting fair and ethical outcomes. By improving interpretability and transparency, organizations can increase accountability, foster trust, and meet regulatory requirements that demand clarity in automated decision-making.

☛ AI Integration Testing

AI integration testing makes sure that AI components interact smoothly with databases, APIs, third-party services, and user interfaces. Because AI systems seldom function in isolation, it is crucial to verify how well they work within the wider software ecosystem.

This testing confirms that data flows correctly across components by verifying that inputs are processed appropriately and outputs are delivered accurately to downstream systems. When AI-driven services such as chatbots, recommendations, and predictive analytics are added, it also verifies response consistency, data interchange formats, and API behavior.

Integration testing also assesses how effective the system’s error-handling procedures and fallback mechanisms are, and checks compatibility across platforms, devices, and environments. Performance during integration is another crucial factor: it ensures that adding AI components does not degrade the speed or dependability of the system. Through comprehensive AI integration testing, organizations can ensure seamless operations, a better user experience, and dependable end-to-end functionality.
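
Fallback behavior can be tested by swapping the real AI service for stub clients. In this sketch `get_recommendations`, `AIServiceError`, and the stub clients are hypothetical names; the pattern is to assert which path (AI or fallback) was taken for healthy, failing, and malformed responses.

```python
import logging


class AIServiceError(Exception):
    """Hypothetical error raised by the AI client when the service misbehaves."""


def get_recommendations(client, user_id, fallback):
    """Call the AI service; return (items, source) and fall back on errors or bad output."""
    try:
        items = client(user_id)
    except (AIServiceError, TimeoutError) as exc:
        logging.warning("AI service failed for %s: %s", user_id, exc)
        return fallback, "fallback"
    if not isinstance(items, list) or not items:
        return fallback, "fallback"  # malformed or empty response
    return items, "ai"


# Stub clients stand in for the real service during integration tests.
def healthy_client(user_id):
    return ["p7", "p9"]


def timeout_client(user_id):
    raise TimeoutError("model endpoint timed out")


def malformed_client(user_id):
    return None


FALLBACK = ["p1", "p2"]
assert get_recommendations(healthy_client, "u1", FALLBACK) == (["p7", "p9"], "ai")
assert get_recommendations(timeout_client, "u1", FALLBACK) == (FALLBACK, "fallback")
assert get_recommendations(malformed_client, "u1", FALLBACK) == (FALLBACK, "fallback")
```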

☛ AI Regression Testing

Regression testing for AI ensures that changes or upgrades to machine learning models don’t adversely affect existing functionality or performance. Because AI models change over time with fresh data and algorithm improvements, it is crucial to confirm that previously working features still behave as intended. This testing compares the outputs of the current model with those of earlier versions to catch unintended changes or accuracy erosion. It also checks whether improvements in one area have caused problems in another, so that model performance stays balanced.

Regression testing includes validating key metrics such as accuracy, precision, recall, and consistency across several datasets. Automated testing frameworks often streamline this process, particularly in continuous development settings. Regression testing also ensures that dependent systems, integration points, and APIs keep working properly after upgrades. Even as models are regularly updated, AI regression testing helps preserve system stability, dependability, and user confidence by spotting problems early.
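
A regression gate compares a candidate model’s metrics with the current production baseline and blocks the release if any metric drops too far. The baseline numbers and the 0.01 tolerance below are hypothetical; the example shows a candidate that improves accuracy and precision but loses recall, exactly the kind of trade-off regression testing should surface.

```python
# Hypothetical metrics of the model currently in production.
BASELINE = {"accuracy": 0.91, "precision": 0.88, "recall": 0.84}


def regression_gate(candidate, baseline, max_drop=0.01):
    """Return {metric: (baseline, candidate)} for every metric that regressed.

    A metric regresses if it is missing from the candidate or is lower than the
    baseline by more than `max_drop`. An empty result means the gate passes.
    """
    failures = {}
    for name, base_value in baseline.items():
        value = candidate.get(name)
        if value is None or base_value - value > max_drop:
            failures[name] = (base_value, value)
    return failures


candidate = {"accuracy": 0.93, "precision": 0.89, "recall": 0.80}
print(regression_gate(candidate, BASELINE))  # {'recall': (0.84, 0.8)}
```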

☛ Continuous AI Testing (MLOps Testing)

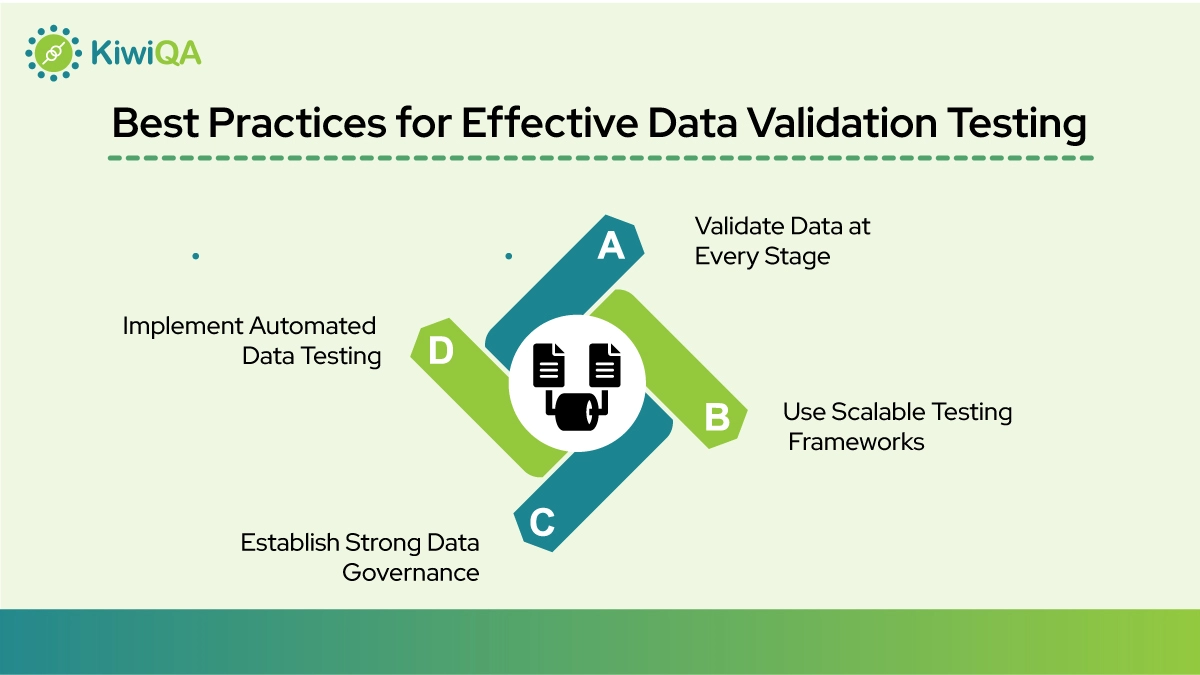

Continuous AI testing, also known as MLOps testing, is the process of validating AI systems continuously at every stage of development, deployment, and beyond. Unlike traditional testing, it builds testing into CI/CD pipelines, enabling rapid feedback and real-time monitoring. It ensures that models stay current, accurate, and dependable as fresh data is added. Continuous testing includes automated validation of data quality, model performance, and system behavior at every stage.

An AI testing services provider also watches for performance decline, model drift, and data drift in production. When problems are found, alerts and feedback loops trigger updates. Continuous testing also ensures that new model versions don’t interfere with existing systems and that deployments go smoothly. Cooperation between teams is a crucial component of MLOps testing. With continuous AI testing, organizations can maintain robust, scalable, and high-performing AI systems that adapt to evolving data and business needs.
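
Production monitoring with alerts can be sketched as a rolling-window check. This example assumes that ground-truth labels eventually arrive for predictions; the window size and threshold are hypothetical, and a real deployment would send the alert to an on-call or ticketing system.

```python
from collections import deque


class RollingAccuracyMonitor:
    """Track accuracy over the last `window` labelled predictions and flag drops."""

    def __init__(self, window=100, threshold=0.85):
        self.results = deque(maxlen=window)  # oldest results fall out automatically
        self.threshold = threshold

    def record(self, prediction, actual):
        """Store one outcome and return True if an alert should fire."""
        self.results.append(prediction == actual)
        return self.should_alert()

    def accuracy(self):
        return sum(self.results) / len(self.results) if self.results else None

    def should_alert(self):
        # Only alert once the window is full, to avoid noise from tiny samples.
        return len(self.results) == self.results.maxlen and self.accuracy() < self.threshold


monitor = RollingAccuracyMonitor(window=10, threshold=0.8)
alerts = [monitor.record(1, 1) for _ in range(10)]  # healthy period
alerts += [monitor.record(1, 0) for _ in range(3)]  # model starts getting things wrong
print(alerts[-3:])  # [False, False, True]: fires once accuracy falls to 0.7
```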

Ready to Ensure the Quality and Reliability of Your AI-Powered Platform?

AI system testing services are no longer optional; they are a necessity for improving quality. If your goal is to automate stable web flows, maintain readable tests, and keep QA predictable under CI/CD pressure, choose an AI testing team. The next era of software testing will depend on AI-human collaboration: while AI accelerates and optimizes testing, human expertise remains necessary for strategic thinking, creativity, and decision-making. Businesses that successfully integrate AI into their testing see better efficiency, broader test coverage, and faster releases.

Gaps in testing can cause your AI system to fail. A strong AI testing service provider takes a structured approach: the team verifies data quality before model training and documents performance against the production baseline. The best AI testing companies combine AI-powered verification with real-time testing on live devices. With them, businesses can achieve 50% faster release cycles and scale without added QA overhead.